Technology

Fluent.ai is a leader in speech understanding and voice user interface solutions.

How do we do it?

Based on over nine years of research in machine learning and artificial intelligence, and multiple families of issued patents, Fluent.ai’s technology is unique and unmatched.

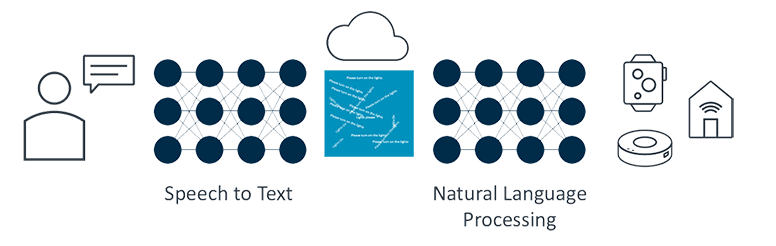

Conventional speech understanding solutions operate in two distinct steps: they first transcribe speech into text in a target language, then apply natural language processing to that text to determine the user’s intent. This approach involves large data collection and labeling efforts and requires a large amount of computing power to develop models for even a single language. It also relies on a number of disjointed modules, such as an acoustic model and a language model, to map input speech to a string of words. These modules are not optimized together and hence do not provide optimal speech recognition performance, which becomes particularly evident in noisy environments or with variability in speaker accents.
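To make the two-step flow concrete, here is a minimal Python sketch. It is an illustration only: the function names, the lookup table standing in for a speech recognizer, and the keyword rules standing in for natural language processing are all hypothetical, not any vendor's actual implementation. It shows how a single transcription error in the first step can leave the second step unable to recover the intent.

```python
from typing import Dict, Optional

# Hypothetical stand-in for a speech recognizer: maps an utterance ID to a
# transcript. A real system would run acoustic and language models here.
FAKE_TRANSCRIPTS: Dict[str, str] = {
    "clean_audio": "turn on the lights",
    "noisy_audio": "turn on the flights",  # simulated recognition error
}


def speech_to_text(audio_id: str) -> str:
    """Step 1: transcribe speech to text (simulated by a lookup)."""
    return FAKE_TRANSCRIPTS[audio_id]


def text_to_intent(text: str) -> Optional[str]:
    """Step 2: toy language understanding that matches keywords in the text."""
    words = set(text.split())
    if {"lights", "on"} <= words:
        return "lights_on"
    if {"lights", "off"} <= words:
        return "lights_off"
    return None  # no intent could be determined from the transcript


def two_step_pipeline(audio_id: str) -> Optional[str]:
    """Chain the two independent steps; step 2 only ever sees step 1's text."""
    return text_to_intent(speech_to_text(audio_id))


print(two_step_pipeline("clean_audio"))
print(two_step_pipeline("noisy_audio"))
```

Because the second step only sees the transcript, the simulated misrecognition of "lights" cannot be corrected downstream, which is one way to picture why separately built modules can struggle in noise.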

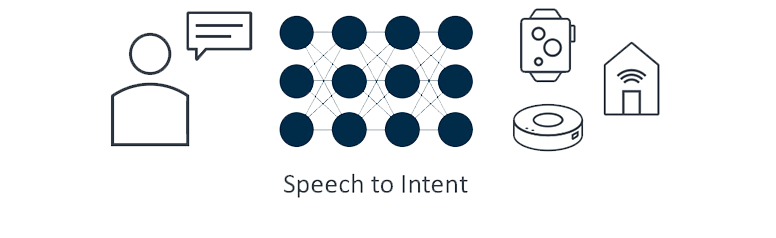

Fluent.ai’s speech-to-intent technology employs unique neural network algorithms to map a user’s incoming speech directly to their intended action, without the need for speech-to-text transcription. During training, Fluent.ai technology learns by directly associating semantic representations of a speaker’s intended actions with the spoken utterances. In this respect, our models are based on the concept of vocabulary and language acquisition in humans. Unlike conventional automatic speech recognition (ASR), Fluent.ai technology does not require phonetic transcription. This text-independent approach enables the development of speech understanding models that can learn to recognize a new language from a small amount of data, and it allows end users to interact with devices in a language of their choice. Users do not need to conform to any preset phrases and are free to choose the words they prefer.
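The idea of learning from (utterance, intent) pairs with no transcript in between can be sketched with a toy example. This is not Fluent.ai's neural network algorithm: it swaps in a simple nearest-centroid classifier, and the two-dimensional feature vectors, intent labels, and function names are all assumptions made for illustration. The only point it demonstrates is that training data can consist of acoustic features paired directly with intents, without any text.

```python
import math
from typing import Dict, List, Sequence, Tuple

Features = Sequence[float]  # stand-in for acoustic features of one utterance


def train_centroids(
    examples: List[Tuple[Features, str]],
) -> Dict[str, List[float]]:
    """Average the feature vectors of each intent; no transcripts are used."""
    sums: Dict[str, List[float]] = {}
    counts: Dict[str, int] = {}
    for feats, intent in examples:
        if intent not in sums:
            sums[intent] = [0.0] * len(feats)
            counts[intent] = 0
        sums[intent] = [s + f for s, f in zip(sums[intent], feats)]
        counts[intent] += 1
    return {i: [s / counts[i] for s in sums[i]] for i in sums}


def predict_intent(centroids: Dict[str, List[float]], feats: Features) -> str:
    """Return the intent whose centroid is closest to the input features."""
    return min(centroids, key=lambda i: math.dist(centroids[i], feats))


# Hypothetical training set: features paired directly with intent labels.
training = [
    ([0.9, 0.1], "lights_on"),
    ([0.8, 0.2], "lights_on"),
    ([0.1, 0.9], "lights_off"),
    ([0.2, 0.8], "lights_off"),
]
model = train_centroids(training)
print(predict_intent(model, [0.7, 0.3]))
print(predict_intent(model, [0.3, 0.6]))
```

Since the labels are intents rather than words, the same training loop would apply unchanged to utterances in any language or phrasing, which is the property the paragraph above describes.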

Competitive Advantages

Leading Speech to Text Providers: A, B, C, D. Speech to Intent: Fluent.ai.

| Comparison | A | B | C | D | Fluent.ai |
| --- | --- | --- | --- | --- | --- |
| Accuracy | 50% | 75% | 50% | 50% | 100% |
| Noise Robustness | 50% | 50% | 50% | 50% | 100% |
| Improvements with User Feedback | N/A | N/A | N/A | N/A | 100% |
| Offline Performance | 50% | N/A | 50% | N/A | 100% |
| Recognition Speed | 25% | 50% | 50% | 25% | 100% |
| Customizability | N/A | N/A | N/A | N/A | 100% |
| Size of Typical Training Data | 10,000+ hrs | 10,000+ hrs | 10,000+ hrs | 10,000+ hrs | <10 hrs |
| Speed to Launch New Languages/Accents | 25% | 25% | 25% | 25% | 100% |
| Ability to Handle Mix of Languages | 25% | 25% | 25% | 75% | 100% |

Enhance your devices with Fluent.ai's offline, robust and multilingual voice AI engine